From the moment Bereket Tsegay began working as a moderator for TikTok in Kenya, a hub for social media moderation in Africa, the job felt impossible. Each shift, he was tasked with reviewing several hundred videos that had been reported for violating the platform’s guidelines. As images – many of them deeply disturbing – flashed across his screen, he had to make a split-second decision about each video: leave it up or take it down.

Mr. Bereket was hired because he spoke Ethiopia’s lingua franca, Amharic, but the videos in his queue came in dozens of African languages, most of which he didn’t know.

If he didn’t understand the audio and the visuals weren’t suspect, Mr. Bereket says he usually just left the video on the site. That is, unless many users had reported it. Then he took the video down.

It wasn’t a very accurate way to judge, admits Mr. Bereket, who no longer works in the field. But “it is bound to happen … because there are never enough moderators.”

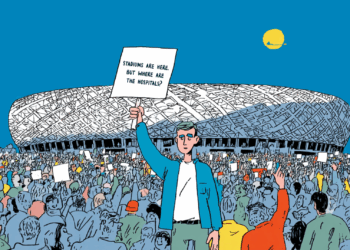

Everywhere in the world, social media moderation is an imperfect science. Machines and humans trawl through vast seas of content, making rapid subjective judgments. But the challenges are even bigger in places where both human and AI filters struggle to understand what’s being said.

“We’re talking about an algorithm, trained predominantly in English, being trusted to take down … harmful content, while a huge percentage of TikTok users in Kenya are using TikTok in their mother tongue,” says Mercy Mutemi, director of the Oversight Lab, a Kenyan legal advocacy group focused on technology.

An impossible task

The problem is not unique to Kenya. The first line of defense against harmful social media posts globally is artificial intelligence, which can be taught to flag rule-breaking content it sees or hears.

TikTok declined to comment about which languages it uses AI moderation for, but a spokesperson wrote in an email to The Christian Science Monitor that “a combination of technology and human moderation” is employed “in many languages and dialects globally and we keep increasing them as the platform grows.”

But even when it is used, AI moderation works well in only a few major global languages. In many others – dubbed “low-resource languages” – researchers say the models just haven’t been fed enough training data to accurately analyze content.

In those cases, responsibility for taking down “bad” posts falls mostly to people. And often, they simply cannot keep up.

In Africa, TikTok outsources most of its content moderation to Teleperformance, a French company operating out of Nairobi. Former employees who spoke to The Christian Science Monitor say their starting salary was about $300 per month. They report that they review around 500 videos per shift – or about one every minute for nine hours straight. At that speed, they say, total accuracy is impossible.

Crowdsourced policing

Responding to this deficit, many Kenyans have begun to act as their own watchdogs. In a recent survey of Swahili-speaking social media users, some 70% said they had reported a post to the platform at least once. “Mass reporting,” where users encourage each other to flag the same account, is also common.

Pauline Onyango learned that firsthand. After graduating from high school in November 2024, she decided to pursue her dream of becoming famous on TikTok.

But months of dance routines, lip-sync clips, and comedic content yielded few new followers. Then she began posting vulgar videos about sex in her native language, Luo, and at last, the algorithm seemed to notice her.

Soon, Ms. Onyango says, she had more than 40,000 followers. “It felt really good,” she recalls. “Many people cheered me on in the comments.”

But she quickly attracted detractors, too, like popular Kenyan TikTok creator Duncan Onyonka. “Such … videos, you cannot even sit in a room where there are many people and start watching,” he says. “They will be shocked.”

So he began making videos rebuking Ms. Onyango and asking his followers to report her account, Mama Mapenzi.

“You started well on TikTok, but you’ve recently started a trend of saying words as big as the heavens,” he said in one video. “I wonder why the TikTok community has not checked how the guidelines work, leaving you to say such things as you wish.”

The plea garnered over 700,000 views and more than 1,000 comments. Other users began posting similar videos about Ms. Onyango, and last October, TikTok closed her account.

Ms. Onyango says her account being shut down was a wake-up call. Salacious content was never really “her,” she says, but rather a quick way to views and likes.

An imperfect solution

But experts say ordinary users aren’t always the best judges of what does and doesn’t belong on a social media site. For instance, they may push for content they don’t like to be taken down – even if it doesn’t actually violate the platform’s rules. And crowdsourcing moderation can also feed into harmful social norms.

For example, when researchers at the Center for Democracy and Technology spoke to speakers of the South Asian language Tamil, users who identified as LGBTQ+ or who belonged to a traditionally oppressed caste frequently complained that platforms let harassment against them go unchecked. Slurs “were not flagged or taken down … despite the swift removal of words with similar connotations shared in different languages,” the CDT’s report concluded.

In a written statement, a TikTok spokesperson told the Monitor that the company doesn’t tolerate bullying, harassment, and abuse, and that it takes down 90% of offensive content before it is even reported.

But when posts get taken down erroneously, it can feed concerns about censorship. These worries are common among speakers of low-resource languages. For instance, nearly 80% of Tamil-speaking social media users polled by the CDT said they are concerned about being silenced by platforms. Two-thirds of speakers of both Swahili and the South American language Quechua fear the same.

Jackson Busolo is one of them.

One morning in February 2025, “I just woke up and found [my TikTok] account gone,” he recalls.

There was no explanation, but Mr. Busolo, who posts in Swahili, suspected it had something to do with his posts accusing Kenya’s leaders of stealing funds from public coffers.

“I ask myself, and this is a question you should be asking yourself, what is this money doing?” he says in one such video.

Since TikTok hadn’t given him a clear reason for the ban, Mr. Busolo decided to appeal, and one day, his account suddenly reappeared.

He never got an explanation for that either.