A recent dispute between the Pentagon and the artificial intelligence company Anthropic has brought long-standing tensions between national security and the ethical development of AI into the national spotlight.

It also represents the latest instance in which AI’s swift integration into business and government has collided with growing concerns that its development is leaving safety protocols behind.

In late February, Anthropic refused to allow the Pentagon unrestricted use of its technology, insisting on limitations it said were essential for safety. The Department of Defense had used the company’s technology in operations to seize Venezuelan President Nicolás Maduro in January and in the Iran strikes that began on Feb. 28.

Why We Wrote This

Artificial intelligence is developing so quickly that it’s raising questions of safety and control as the technology’s capabilities meet the demands of those, such as the federal government, who are using it.

The Pentagon responded to Anthropic’s demand by designating the company “a supply chain risk to national security.” After negotiations fell apart, Anthropic’s competitor OpenAI signed a deal with the Pentagon without the restrictions Anthropic had sought. Anthropic has sued the Trump administration, saying its action was “unlawful.”

As AI development accelerates, Congress has done little to regulate the technology, and the Trump administration has followed suit. Last year, President Donald Trump issued an executive order limiting states’ abilities to set safety guidelines. The lack of government regulation means AI companies are deciding on their own how to address safety concerns while scrambling to keep up with competitors in countries such as China. The companies’ differing approaches to safety and guardrails could shape the future of this transformative technology.

Here’s a look at the state of AI and the companies at its forefront.

Which companies are the major players in AI?

Two of the best-known AI companies are OpenAI and Anthropic. OpenAI was founded in 2015 by a group of entrepreneurs that included Sam Altman, now its CEO, as well as Elon Musk, Greg Brockman, and Ilya Sutskever. The company is responsible for one of the most recognizable AI tools, ChatGPT, a chatbot that uses artificial intelligence to engage in conversation with users and answer their questions.

Despite his early criticism of President Trump, Mr. Altman has had a friendly relationship with the second Trump administration. OpenAI’s president, Mr. Brockman, has donated millions to a political action committee supporting Mr. Trump.

Anthropic PBC was founded in 2021 by former OpenAI employees who left that company over concerns it was prioritizing speed over safety, among other disputes. Anthropic’s signature tool, Claude, operates similarly to ChatGPT, though it is considered stronger in some areas, like coding.

Anthropic promotes itself as a more safety-conscious company than its competitors. It donates to political groups that support AI regulation and says it wants to imbue its models with moral values. However, it drew criticism in February for dropping a core promise to pause training on AI systems that power things like chatbots, AI robots, and defense systems, unless it could guarantee that risks were properly mitigated.

Anthropic CEO Dario Amodei has made bleak predictions about AI, saying it will probably cause “unusually painful” job disruption and, without proper precautions, could lead to mass death through its potential to help bad actors. But he has also said the United States must stay competitive with countries like China.

“The Anthropic folks seem very genuinely convinced that they are making decisions about the most important technology that has ever occurred in human history,” says Bill Drexel, a senior fellow at the Hudson Institute who researches AI competition with China.

What safety questions are being raised?

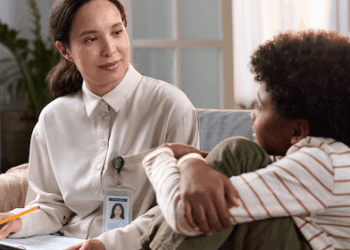

Most AI companies are addressing multiple issues around AI safety, including establishing mental health guardrails, ensuring data privacy, and preventing chatbots from inventing facts.

But whether those efforts will be successful remains in question.

Multiple lawsuits have alleged that in 2025, ChatGPT gave harmful advice to people experiencing mental health crises, including one instance that resulted in a person dying by suicide. Last year, OpenAI made updates it said were aimed at preventing such incidents.

Some states have proposed laws to establish safety parameters for how AI bots interact with children or to ensure the bots can’t provide users with information needed to commit a crime, such as instructions on how to build a chemical weapon. But an executive order from President Trump in December pressured states to back off enforcing any regulations the administration deems burdensome. That could mean states don’t enforce regulations like one in Colorado that bans algorithmic discrimination, which occurs when AI tools show bias toward certain groups based on factors like race or religion.

The Anthropic dispute also highlighted ethical questions about the military’s use of AI. For example, Anthropic did not want its technology used in conjunction with lethal weapons that could target and fire without a human at the controls.

Kay Firth-Butterfield, CEO of Good Tech Advisory LLC, says the use of such weapons raises ethical questions.

“The concept of fighting a war when nobody dies apart from civilian casualties has a moral dimension that I don’t think anybody’s discussed,” she says. “Because, you know, one of the things that stops wars is when we lose people.”

Where do companies have red lines?

Anthropic raised two major red lines in its dispute with the Pentagon. Mr. Amodei wanted a legally binding agreement that would prevent the Pentagon from using Anthropic’s technology to conduct domestic surveillance of Americans or to power lethal autonomous weapons.

Mr. Altman, OpenAI’s CEO, publicly backed Anthropic’s position that AI should not be used for the two purposes it outlined. But OpenAI still signed off on the Pentagon’s requirement that its models could be used for “all lawful purposes.” The company said it negotiated the right to put technical guardrails on its systems to ensure the military followed its safety principles.

That “all lawful purposes” language had been a stumbling block for Anthropic. Mr. Amodei said current AI systems are not up to the task of powering autonomous weapons because they lack the judgment skills of well-trained human soldiers.

In the wake of the dispute, major defense companies have issued directives to stop using Anthropic’s technologies, though many technologists in Silicon Valley have supported the company’s decision.

How is AI changing?

For most people, AI is rapidly becoming more integrated into daily life. The technology’s latest development is something called agentic AI – a machine learning model that can complete multistep tasks on people’s behalf.

An AI agent can make decisions on its own and then adapt those decisions or behavior based on a designated goal. For example, it might plan a vacation and book hotel reservations and plane tickets for someone based on that traveler’s preferences.

As these models become more advanced, experts predict they could change companies’ workflows and consumers’ experiences. Major businesses like Walmart and JPMorganChase are already using AI tools to do things like detect fraud or interact with customers.

Many companies are racing toward what they see as the next frontier in AI: something called artificial general intelligence, or AGI. This refers to technology that could, in theory, meet or surpass human intelligence. Most AI today is confined to a specific type of task, such as an AI chatbot that answers questions. If achieved, AGI could apply knowledge across different subjects and adapt to new situations. Imagine a customer service bot that anticipates problems and tailors its responses, or an advanced self-driving car that adapts in real time to weather events.

A large portion of a $50 billion investment by Amazon in OpenAI could be contingent on the company achieving AGI, reports The Information, a publication covering the tech, finance, and media industries.

Experts say these new developments will raise even more questions about safety.

“Everybody’s chasing AGI, but what we don’t necessarily know is whether AGI will be safe,” says Ms. Firth-Butterfield. “We don’t know what it will be capable of doing.”