Artificial intelligence is becoming an integral part of daily life for most Americans as they interact with chatbots, read summaries of Google searches, or receive personalized recommendations from social media algorithms.

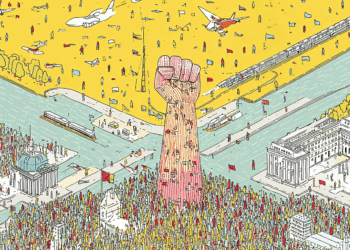

This widespread use – and the booming industry around it – is raising the question of who gets to shape the rules for the transformative technology: the federal government, which argues that too much regulation could hinder innovation, or individual states, which have begun to pass laws designed primarily to protect AI users from things like discrimination or dangerous suggestions from chatbots.

It’s a battle playing out in the government right now. Some Republicans in Congress are reportedly trying to insert a moratorium on state AI regulations into a must-pass defense spending bill that lawmakers aim to approve by the end of the year. At the same time, President Donald Trump has drafted an executive order that would pressure states to back off enforcing any regulations the administration deems burdensome, according to news reports.

Why We Wrote This

Artificial intelligence is showing up more and more in Americans’ daily lives, raising questions about who should regulate it, and whether the focus should be on unleashing innovation or protecting the people who use AI.

“Investment in AI is helping to make the U.S. Economy the ‘HOTTEST’ in the World, but overregulation by the States is threatening to undermine this Major Growth ‘Engine,’” President Trump wrote on social media last week.

Punchbowl News reported last week that House Republicans are urging the White House to hold off on an executive order while they try to negotiate a compromise. Commerce Secretary Howard Lutnick has meanwhile told tech industry officials that the administration will act on its own if Congress isn’t making progress.

A recent Gallup poll shows that most Americans agree that the U.S. should have more advanced technologies than other countries. But 97% say AI should be subject to regulation.

Across the country, rules are taking shape. Lawmakers in all 50 states have introduced AI-related legislation, and at least 38 states have already enacted AI-related laws this year. Many of those lawmakers say it’s essential to be able to respond quickly and nimbly to the ever-shifting challenges posed by AI.

“The states are the laboratories of democracy,” says Democrat James Maroney, a Connecticut state senator who sits on a steering committee for a multistate working group on AI. The group includes Republicans and Democrats. “We know the federal government just can’t act as quickly, and we know how rapidly these technologies are changing, allowing the states to regulate where they’re closer to seeing the harms.”

What states are passing

The Trump administration has previously tried to stop states from enforcing AI regulations. A draft of the Republicans’ tax-and-spending bill this past summer included a moratorium blocking states from passing such laws for 10 years. That proposed ban received considerable backlash from both Republican and Democratic governors and state officials, and it was ultimately stripped from the bill in the Senate by a 99-1 vote.

But the debate may not be over.

Republican Sen. Ted Cruz insisted at a Politico AI & Tech Summit in September that the idea of a 10-year moratorium is “not at all dead.”

As Congress and the administration weigh other ways to curb, or altogether halt, state regulations, states are moving forward. California Gov. Gavin Newsom, for one, has signed more than a dozen AI bills into law in the last two years. Among them is a landmark transparency bill, signed this September, that requires large AI companies to disclose how they’re implementing safety protocols so that a chatbot can’t, for example, give a user instructions on how to build a chemical weapon.

New York has advanced a similar law this year. An Illinois law imposed sweeping restrictions on AI use in therapy. And Colorado and Texas have passed bills protecting people from discrimination when AI algorithms are used to make consequential decisions, such as those related to housing or employment.

Several experts interviewed by the Monitor said that they expect a significant amount of legislation in the coming year that could establish safety parameters for how bots, such as ChatGPT, interact with users, especially children.

A “patchwork of indecision”

The lawmakers passing these bills say they’re necessary to address challenges that people are already facing from AI technologies. But some experts and lawmakers fear the laws will have unintended side effects.

In a September congressional hearing, Rep. Darrell Issa of California warned of a “patchwork of indecision.” If each state is passing its own laws, he said, companies could face a tangled web of conflicting laws and regulations.

Many Republicans and technology leaders fear that sorting through these standards could create cost barriers, hindering AI innovation for businesses and developers. That would come at a time when the U.S. is closely competing with China to see who will emerge as an AI leader, with the ability to shape AI algorithms and applications.

“When you have 50 different states creating potentially 50 different sets of rules, some of which could even end up being in conflict with one another – that creates such a regulatory nightmare,” says Patrick Hedger, the director of policy at NetChoice, a trade association advocating for free enterprise on the internet.

Travis Hall, the director of state engagement for the nonprofit Center for Democracy and Technology, doesn’t see this “patchwork” argument actually playing out, at least so far.

He says many of the laws passed address specific industries, such as health care, that are already regulated under state law. And the ability to target local industries is one of the reasons he believes AI regulations should be implemented at the local level.

A recent Tennessee law called the ELVIS Act, for example, protects against the use of AI to impersonate musicians’ voices. Mr. Hall says the law makes sense for a state like Tennessee, which values its musicians and is where Elvis Presley grew up and made his home.

“I have a hard time imagining a federal bill that would really cover the landscape of things that AI touches,” he says.

As states work to pass these laws, though, the process can be messy. American Enterprise Institute economist Will Rinehart points to problems with Colorado’s AI law this year as an example of states overlooking the burdens that can fall on businesses.

Although the law protecting against discrimination was signed in 2024, the state’s Democratic Gov. Jared Polis – who supported a 10-year moratorium on state regulation – responded to objections from tech groups about its potential impact by delaying implementation until June 2026. Among the concerns: Under the initial law, AI developers could be held responsible if their algorithms caused discrimination, regardless of the developers’ intent.

Mr. Rinehart, for one, says he supports “the nice, big overall idea of trying to give people protection.” But “when you get into the nitty-gritty of the laws,” he adds, implementing a specific regulation can be “very difficult.”

Senator Maroney in Connecticut is working with lawmakers in other states who are trying to take on such challenges. Their group of over 100 state lawmakers meets twice a month to discuss bills and share resources. Senator Maroney says this idea-sharing helps maintain consistency, avoiding the patchwork problem that many fear.

“The way forward is working together,” he says.